Beyond ASICs

“ASIC”: Application-Specific Integrated Circuits

The concept was that advanced IC tools and technologies such as gate arrays, standard cells, silicon compilers, and so on were to be given to system designers so they could design their own chips instead of using those boring “standard” chips. I say “was” because ASIC, like other over-hyped “concepts,” continues to evolve in search of commercial viability. After figuring out that ASIC is a design and manufacturing technology, not a product, promoters have switched away from system designers and correctly targeted IC houses.

There are some successes, mainly big business and government customers. Lately though, “ASIC” companies are introducing “standard” products as fast as they can. For example, VLSI Technology is making SRAMs and LSI Technology PC chip sets. The original ASIC concept (the designer creates his own chip, manufactured by an outside foundry) has largely failed for two reasons.

First, the tools aren’t that great and designing chips still isn’t easy. True, brainstorming your own architecture ideas is nice, but then you have to deal with messy realities, like a trillion-cycle-simulated prototype IC that doesn’t work and can’t be tested. Proponents argue that the bright future for ASIC is still there right around the corner, as soon as “better” (smarter, cheaper, faster) tools are available.

But the real showstopper for the original ASIC concept doesn’t have to do with technology at all. Instead, it is the marketing “reality” that ASIC really means “custom,” with all the risks associated therewith. For example:

Design Risk: Make sure you budget time and money for the four or five mask revisions it will take to get a chip you can live with.

Inventory Risk: Your company’s ASIC-based “Talky Dick” politician doll went nowhere. Don’t worry: The 100,000 chips in your warehouse ought to make good tie tacks or fishing lures.

Sole-Source Risk: The place making your chips burns down. Switching your design to another foundry may not be as quick and easy as you think.

Maybe N.R.E. (“Non-Recurring Engineering”) really means NeveRending Expense.

PLDs to the Rescue

Enter the PLDs (Programmable Logic Devices). Marketing-wise, the beauty of a field-programmable device is that it is a “standard” chip and thus eliminates the risks associated with “custom” ASICs. Furthermore, low design and device cost make the technology accessible to small- and medium-size companies. PLDs are kind of like microprocessors, except the programming language is hardware oriented (Boolean equations, schematics, state-machine diagrams) instead of instructions.

The modern PLD era started with the introduction of the PAL (Programmable Array Logic) chip by Monolithic Memories Inc. The PAL evolved from earlier generation PLDs, namely PROMs (Programmable Read-Only Memories) and PLAs (Programmable Logic Arrays), combining the best features of each. Using “fuse” technology, PALs were typically used by designers to consolidate glue logic: that miscellaneous TTL that inexorably creeps into every board design. PALs also served as “building blocks” in large systems. For an example, read how PALs saved the day at Data General in “The Soul Of A New Machine” by Tracy Kidder.
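To make the “Boolean equations instead of instructions” idea concrete, here is a minimal sketch of how a PAL-style device can be modeled in software: a programmable AND array whose product terms feed a fixed OR gate. The signal names (A15, A14, IORQ, RESET) and the chip-select equation are invented for illustration and do not describe any particular part.

```python
# Hypothetical model of a tiny PAL-style device: a programmable AND array
# feeding a fixed OR gate. Names and sizes here are illustrative only.

def product_term(inputs, term):
    """AND together the literals left connected in one row of the array.

    `term` maps an input name to True (use the input as-is) or False
    (use its complement). Inputs absent from `term` are not connected.
    """
    return all(inputs[name] == wanted for name, wanted in term.items())


def pal_output(inputs, terms):
    """OR the product terms together, as the fixed OR stage would."""
    return any(product_term(inputs, term) for term in terms)


# Glue-logic example: CS = (A15 AND A14 AND NOT IORQ) OR RESET
CHIP_SELECT = [
    {"A15": True, "A14": True, "IORQ": False},
    {"RESET": True},
]


def chip_select(a15, a14, iorq, reset):
    """Evaluate the made-up chip-select equation for one set of pin levels."""
    pins = {"A15": a15, "A14": a14, "IORQ": iorq, "RESET": reset}
    return pal_output(pins, CHIP_SELECT)
```

In this picture, “programming” the device amounts to choosing which literals stay connected in each product term, which is roughly the job a PLD compiler does when it turns equations into a fuse map.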

PALs languished at the start. MMI was a relatively small company pitching a novel concept to a sceptical audience of small-system designers. The first PAL chips themselves were relatively low density, slow, power hungry, expensive, and sole-sourced to boot. Meanwhile the original tools were on the order of truth-table and “minimize-it-yourself” Boolean equation entry. However, PALs gradually started making inroads into a wide variety of designs. Ultimately, the PAL became more than the company could handle, and MMI was acquired by AMD. But by that time, the original MMI PAL patent license had been seeded to a wide variety of large and small semiconductor companies. Incidentally, I expect the original MMI (now AMD) PAL patent will be the subject of much litigation until it runs out, and probably after that too!

The real excitement now is that next-generation PLD architectures are emerging, featuring advanced processes and ever more powerful and easy-to-use tools. Let’s take a look at three new contenders.

New-age PLDs, LCAs, and FPGAs

The first evolutionary track improves various aspects of the original PAL with upgraded architecture and process. Typical features of these “second-generation” chips include:

Speed/Power: One reason MMI is no longer with us is that they stuck with their old bipolar process long after everyone else had moved on. Most new contenders use high-speed, low-power CMOS processes.

I/O Programmability: The original PAL line-up included dozens of parts, each offering a particular variation of number of inputs and outputs, output clocking and polarity, and so on. By making the chip a little “smarter,” manufacturers have come up with single devices that can be programmed to mimic many of the old versions. Obviously, dealing with just one part is much better for both supplier and customer.

Reusability: Instead of the permanent “fuses” of the original PAL, some suppliers offer EPROM- (Altera) or even EEPROM-based (Lattice) devices. Besides the obvious financial advantages for lab work, field upgrades, and so on, erasability makes testing (by the manufacturer and customer) easier.

Application Specificity: A generic device is “tuned” for a certain niche application by the addition of a small amount of dedicated logic. Examples are devices that include a microprocessor bus interface or are optimized for state-machines.

The list of first- and second-generation PAL/PLD manufacturers includes almost all semiconductor companies, with the notable absence of the top Japanese suppliers.

The first brand new beyond-PAL chip, the Xilinx LCA (Logic Cell Array), features two major innovations. First, the architecture, instead of being based on the PAL’s array of AND/OR gates, utilizes an array of higher-functionality logic cells and I/O blocks. Second, the programmability is in the form of RAM, so it is not only “erasable” like EEPROM, but is “dynamically reprogrammable.”

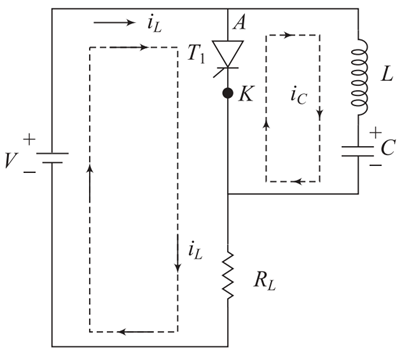

Upping the ante from AND/OR gates to logic cells (called CLBs for Configurable Logic Blocks) and I/O blocks (IOBs) allows an LCA to handle surprisingly complex logic: from 1200 equivalent gates (about the same as a PAL) to 9000 (about the same as a midrange gate array). These correspond to combinations of 64 CLBs/58 IOBs all the way to 320 CLBs/144 IOBs. A single CLB can perform combinatorial and clocked logic operations with multiple inputs and outputs. The IOBs allow inputs to be latched or direct, and outputs to be three-stated.

However, overall LCA performance depends on the cleverness of the routing scheme that connects signals between the cells; each “stage” of signal switching introduces delays. Xilinx uses a 3-level hierarchy of on-chip buses (adjacent, general purpose, and long lines) to optimize the trade-off between speed and flexibility. If your application is relatively simple and/or low performance, you won’t have to worry about routing. Otherwise, you must “tweak” your design to avoid critical path delays.

Meanwhile, the fact that the LCA is based on RAM is both problem and potential. The problem is the LCA RAM needs to be “initialized” each time the system is powered on. Xilinx eases the pain as much as possible by offering various initialization schemes. For example, the LCA can override your pin definitions to implement a parallel bus at power-up, which a host MPU can then use to access the LCA like any other peripheral chip. Alternatively, if an MPU isn’t present, a standard EPROM can be used to boot the LCA. Multi-LCA initialization is handled with a “daisy chain” mode in which initialization data moves along the chain from the first to the last LCA.
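The daisy-chain idea can be sketched in a few lines. This is only a conceptual model, assuming each device keeps the bits it needs and hands the remainder downstream; the device names and bit counts are invented, and the real Xilinx configuration format is not represented here.

```python
# Hypothetical sketch of daisy-chained configuration. Each device keeps
# the bits it needs and hands the remainder to the next device downstream.
# Device names and bit counts are invented for illustration.

def load_chain(bitstream, devices):
    """Distribute `bitstream` along `devices`, a list of (name, size) pairs.

    Returns a dict mapping each device name to its slice of the data.
    Raises ValueError if the stream does not fit the chain exactly.
    """
    loaded = {}
    remaining = bitstream
    for name, size in devices:
        if len(remaining) < size:
            raise ValueError(f"bitstream ran out before {name} was loaded")
        loaded[name], remaining = remaining[:size], remaining[size:]
    if remaining:
        raise ValueError("bitstream is longer than the chain can hold")
    return loaded


chain = [("lca0", 4), ("lca1", 6), ("lca2", 2)]
config = load_chain("101011000111", chain)
```

The point of the model is that the boot source only has to supply one concatenated stream; the chain itself sorts out which device gets which part.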

The potential of RAM-basing, underrated in my opinion, is “soft” hardware. Using one or more LCAs, you could design a system with functionality largely determined by code loaded at power-up. A neat by-product of such a design strategy is that hardware fixes might be as simple as shipping a new ROM or disk, instead of laying out a new circuit board!
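As a rough illustration of “soft” hardware, the sketch below models a logic cell whose behavior is whatever truth table was last loaded into its configuration RAM. The `SoftCell` class is a hypothetical teaching model, not a description of the actual Xilinx logic cell.

```python
# Hypothetical "soft" logic cell: its behaviour is whatever truth table
# was last loaded into its configuration RAM. A simplified teaching model.

class SoftCell:
    def __init__(self, num_inputs):
        self.num_inputs = num_inputs
        self.ram = [False] * (2 ** num_inputs)  # unconfigured at power-up

    def load(self, bits):
        """Overwrite the configuration RAM, as a boot ROM or host would."""
        if len(bits) != len(self.ram):
            raise ValueError("configuration has the wrong length")
        self.ram = [bool(b) for b in bits]

    def evaluate(self, *inputs):
        """Use the inputs as an address into the configuration RAM."""
        if len(inputs) != self.num_inputs:
            raise ValueError("wrong number of inputs")
        address = 0
        for value in inputs:
            address = (address << 1) | int(bool(value))
        return self.ram[address]


cell = SoftCell(2)
cell.load([0, 0, 0, 1])   # behaves as a 2-input AND
and_result = cell.evaluate(1, 1)
cell.load([0, 1, 1, 0])   # same cell, now a 2-input XOR
xor_result = cell.evaluate(1, 1)
```

Nothing about the cell itself changes between the two loads; only the data in RAM does, which is why a hardware fix could in principle ship as a new ROM or disk.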

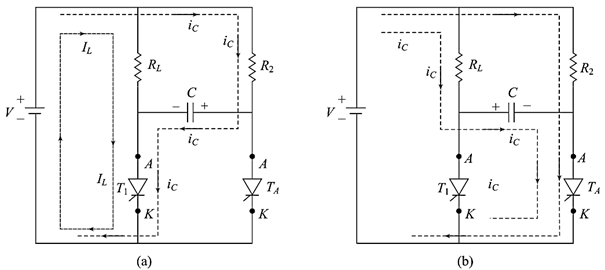

A similar PLD architecture is the FPGA (Field-Programmable Gate Array) by Actel. Like the LCA, the FPGA features logic and I/O modules which are programmably interconnected to determine the chip’s overall function. Thanks to the use of “anti-fuse” technology (the pre-programmed state is “open”), the FPGAs, though not erasable, are highly testable. Further, two pins can act as probes providing access to internal signals, a real plus for debugging. Using hundreds of thousands of anti-fuses, Actel features an automatic place-and-route scheme that is similar to that of a channelled gate array ASIC. The result is flexible interconnection and 85-95% gate utilization. FPGAs are available in densities from 1200 to 6000 gates. Noting that a typical TTL MSI-equivalent function (such as a decoder, counter, or shift register) only consumes about 50 gates gives you an idea of just how powerful FPGAs are.
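A back-of-the-envelope calculation shows what those figures imply. The sketch below takes the numbers quoted above (1200 to 6000 gates, 85-95% utilization, about 50 gates per MSI-type function) and estimates how many such functions might fit; the function name is made up, and the result is a rough estimate rather than a vendor specification.

```python
GATES_PER_FUNCTION = 50  # figure quoted in the text for a typical MSI part


def msi_functions(total_gates, utilization_percent):
    """Estimate how many 50-gate functions fit at a given utilization."""
    usable_gates = total_gates * utilization_percent // 100
    return usable_gates // GATES_PER_FUNCTION


# (low, high) estimates at 85% and 95% utilization for each density
estimates = {
    gates: (msi_functions(gates, 85), msi_functions(gates, 95))
    for gates in (1200, 6000)
}
# estimates == {1200: (20, 22), 6000: (102, 114)}
```

In other words, under these assumptions the smallest part replaces roughly twenty MSI packages and the largest over a hundred.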

Tools are Key

It’s been said that programmable logic without good programming tools is useless. The good news is that tools continue to improve and PLD development is easier than ever. Old and new PAL-type devices are now supported by popular third-party development tools running on PCs and workstations. Leading contenders, such as Data I/O’s ABEL and Logical Devices’ CUPL, allow you to enter, compile, and “burn” (program the PLD) your design. Add-ons bring schematic entry (supplementing the standard Boolean equations), simulation, and automatic test vector generation to your bag of tricks. For a few thousand dollars, you can be the first on your block with a desktop “fab” which supports hundreds of types of low-cost PAL-type chips. Meanwhile, the new-architecture PLDs, such as those from Xilinx and Actel, are also supported by manufacturer-supplied tools which fully exploit their particular technology. These packages feature schematic entry with “macro cell” libraries which implement common SSI/MSI functions, and include the programming hardware needed to put your ideas into silicon.
